Ian Gibbins

FirstEDA

My family and friends often remark on the consistent success I enjoy with holidays and travel.

Most of them know by now that it isn’t just down to luck, and they enjoy teasing me about the laser-like precision of my research, the military-style planning and the meticulous attention to detail. Their jibes of ‘Has it got the view you want?’, ‘Have you booked early enough to get the leg room?’ and ‘Have you got somewhere to smoke a cigar?’ are met with a wry smile, as I know they have all employed my techniques and enjoyed the benefits of a methodology.

Why am I telling you this, you may ask?

Any type of success will benefit from some form of research and planning. In the case of my holidays and travel, it is about the end goal – a holiday destination – and using the tools available, such as Google and Trip Advisor, to aid the research and planning. If the research and planning aren’t started early enough, the view won’t be as good, all the good seats will already be booked and there won’t be much chance of a cigar!

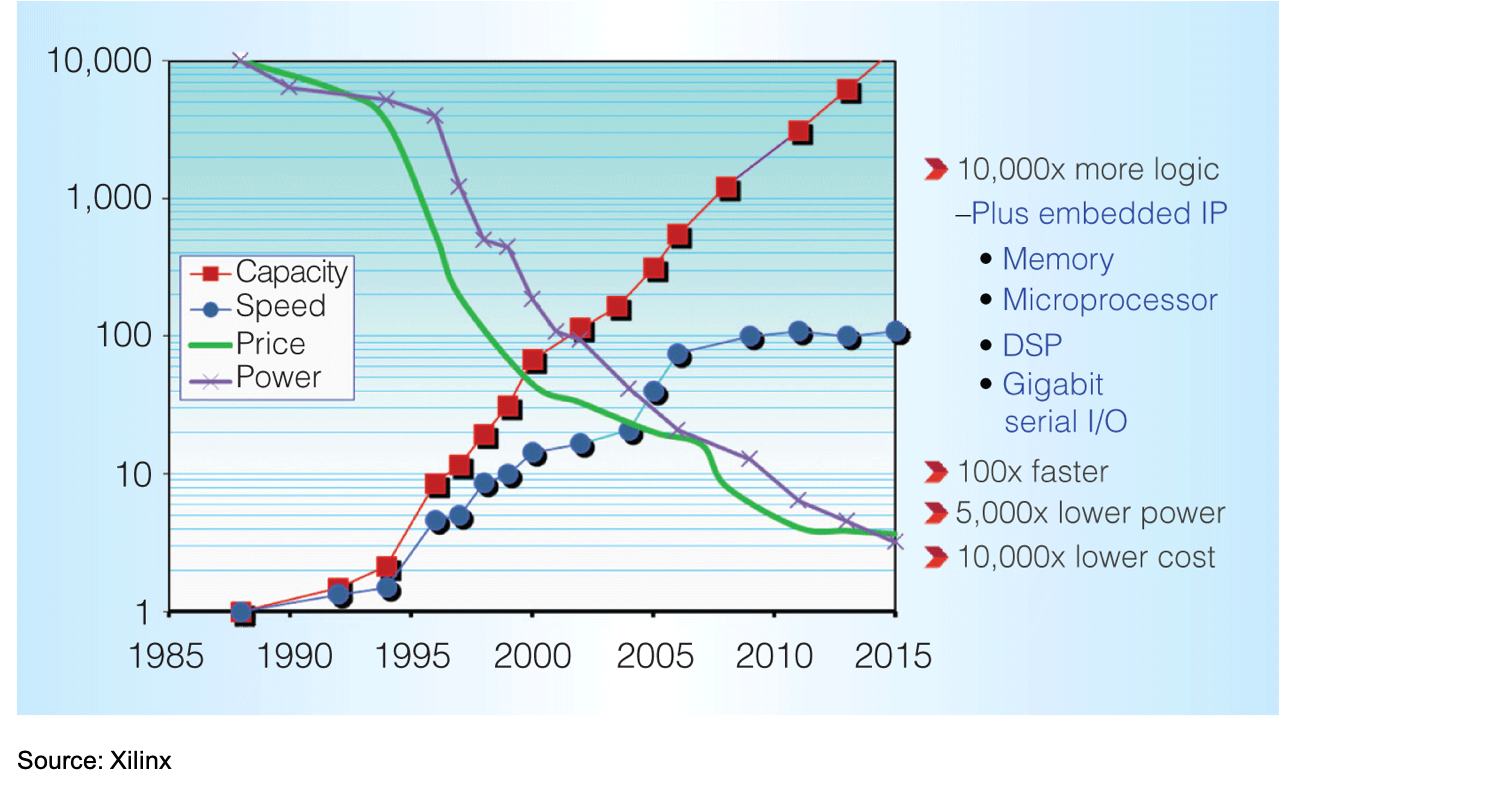

It is well understood that FPGA complexity has risen substantially over the years, to the point that verification strategies are approaching those of the ASIC world.

Figure 1 – FPGA Complexity Over Time

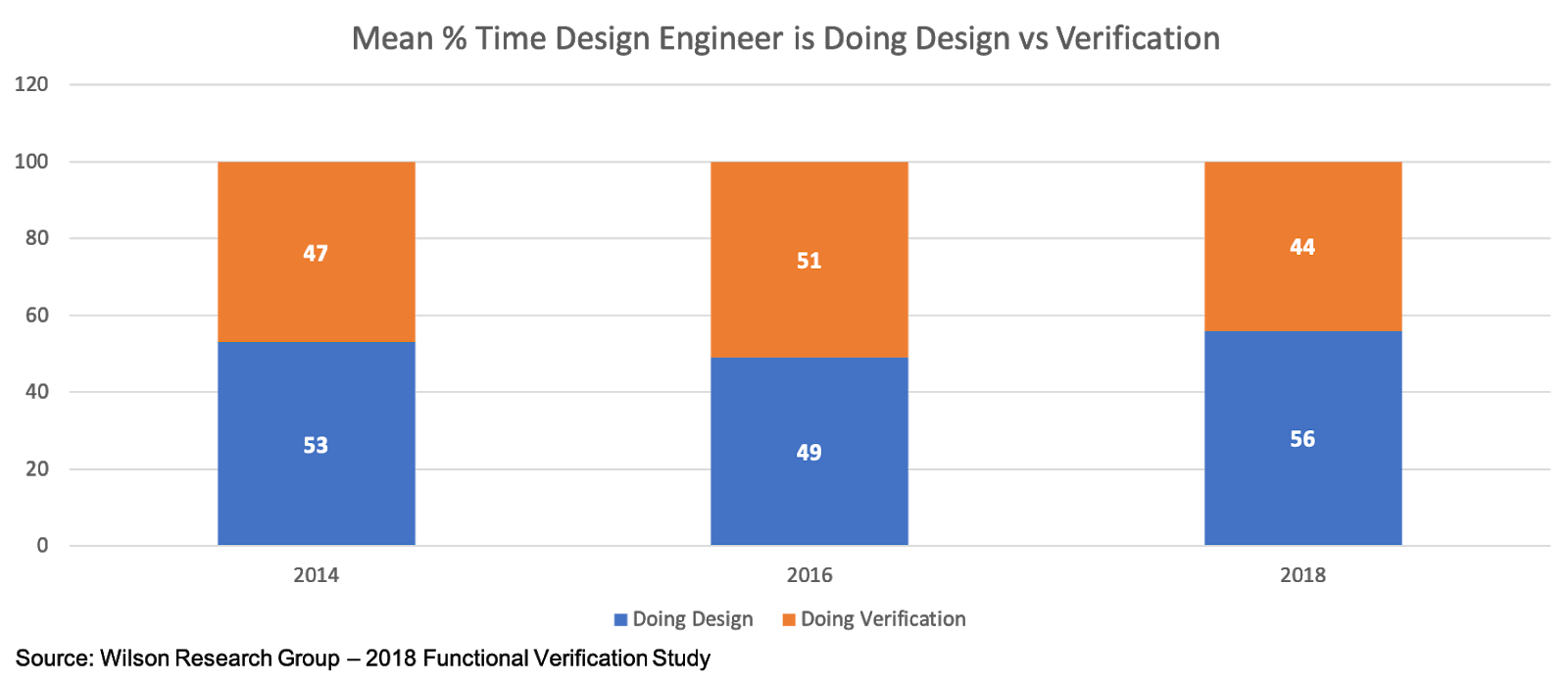

The rise in FPGA complexity has substantially increased the verification burden on designers. FPGA design teams now typically employ engineers dedicated specifically to verification, allowing the designer to focus as much as possible on the design. A recent functional verification study by Wilson Research Group suggests that this decision, together with the increased adoption of larger, more complex FPGAs (which has increased the design engineer’s workload), means that designers are becoming less involved in verification tasks.

Figure 2 – Time That Design Engineer is Designing vs Verifying

This is encouraging news for designers, although a fair proportion of their time is still dedicated to verification.

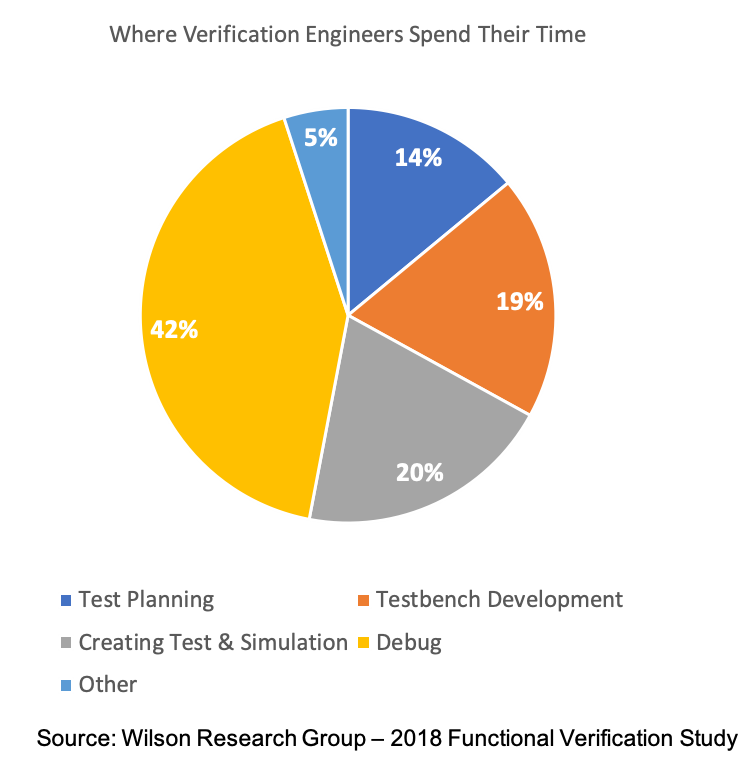

And what are the verification engineers up to?

The same functional verification study by Wilson Research Group found that verification engineers spend almost half their time debugging!

Figure 3 – Where Verification Engineers Spend Their Time

This poses a threat to project planning and execution from a management point of view, since debugging is unpredictable and significantly different for every project. The later a bug is found in the project, the longer it takes to fix. This is a known certainty, a universal constant, and we do not need a graph to remind us of the exponential time increase!

Just like planning my next holiday, the key is to start as early as possible and look for the tools that are going to help achieve the end goal: to tame the uncertainty and unpredictability of debugging.

During my ASIC design days, the earliest that any real debugging could take place was once a module was ready for simulation, which would have included some stimulus. At this point there were usually some code issues to sort out in order to get through the compiler before simulation could take place. Getting to this point could take a few weeks depending upon the module complexity, followed by a further couple of months before the whole design could be simulated, which was the point where the main debugging would take place (pre-synthesis). Extrapolating those metrics from circa 1996 to current complexity would pose serious threats to project timescales if tools for use earlier in the design methodology were not available.

The starting point for any design is putting code to editor and eagerly beginning to craft the functionality. A turbocharged editor exists that can begin the verification journey from the very first code entry.

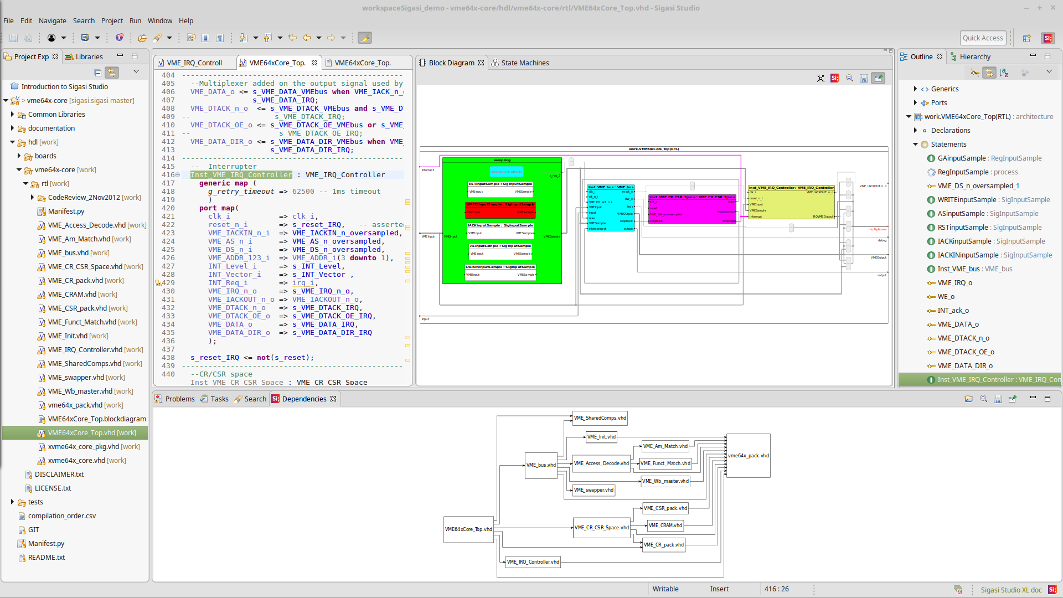

Sigasi Studio is a very sophisticated code editor, with an underlying compiler technology that checks code as it is typed and gives instant feedback on errors and potential issues – all pre-simulation. Its context-aware autocomplete also offers the most relevant completions for wherever you are in your code. All this helps by allowing the focus to be on the design, rather than on language and tools.

But it doesn’t stop there … Sigasi also has built-in graphical capabilities to generate state machine and block diagrams, giving a graphical perspective of the design. The graphical views are linked to the source code for ease of cross-navigation, which is great for assisting early architectural decisions pre-simulation.

Figure 4 – Sigasi Studio-XL

Documentation is paramount in any design project and Sigasi offers a documentation generator to create a PDF with all the relevant information of the project, ensuring that the design documentation can be kept up to date.

So, I can be happy my code is in good hands and will be simulator compiler friendly by the time I get to simulate, but the cigar is still in the case since there is more fabric to be stitched into this methodology tapestry!

Since the early days of synthesis, other uses of the ‘quick synthesis’ (the first stage where the RTL is realised into hierarchy and modules prior to targeting) have emerged, including Aldec’s sophisticated Design Rule Checker, ALINT-PRO.

Harking back to my ASIC days, I recall sitting in a smoke-filled (can you believe it now?!) meeting room for many hours with colleagues, as we pored over each other’s code to ensure it followed the internal coding guidelines. The coding guidelines document was fairly hefty and a well-thumbed copy lived on every engineer’s desk. We had to know it as well as an actor knows their script and not deviate from it unless we had good reason … and signed permission! As you can imagine, coding reviews took hours, days and weeks (along with plenty of passive smoking opportunities).

ALINT-PRO emulates coding reviews in a short space of time and without the smoke! It achieves this with a combination of fast synthesis technology and design rule policies.

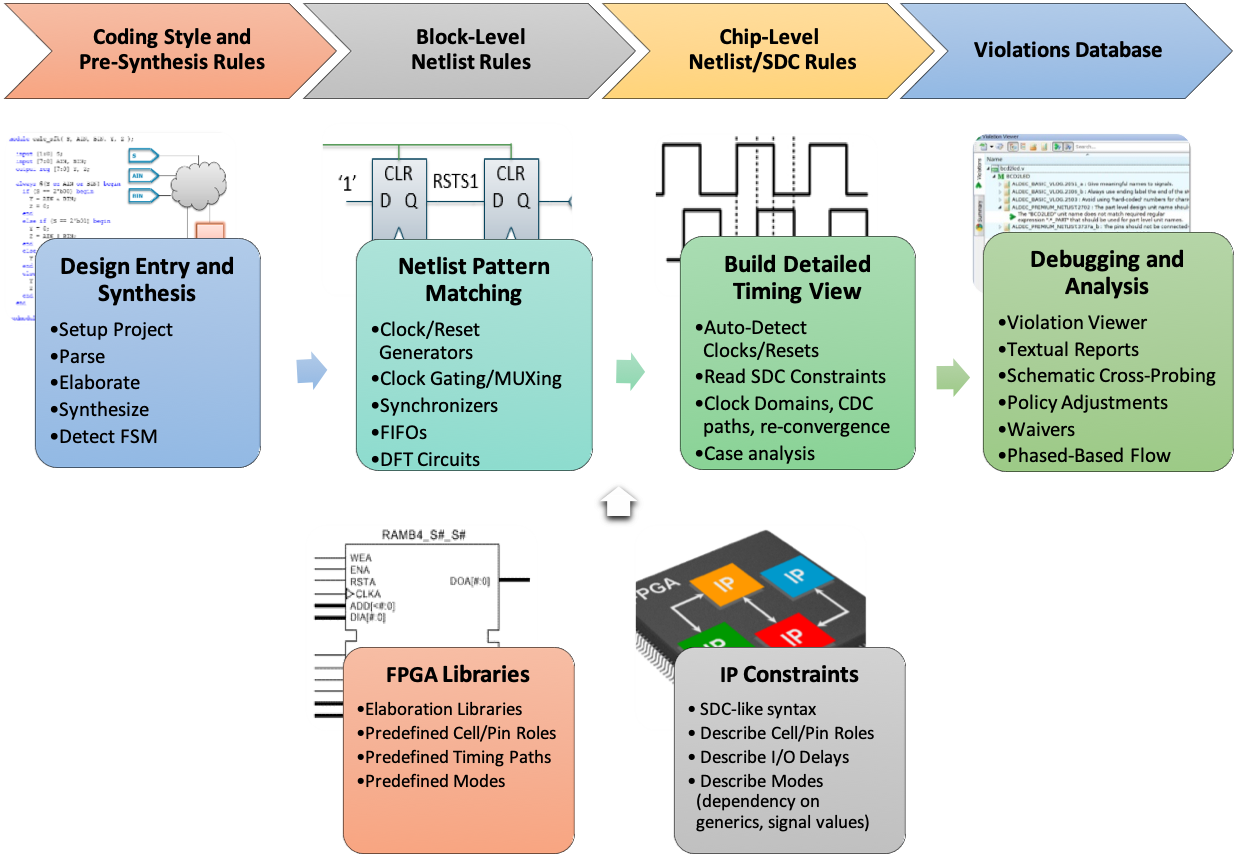

This Design Rule Checking (or linting, as it is sometimes called) is a process of static HDL code analysis which can uncover bugs hidden in the code. The design is analysed in phases: a compilation stage looks at coding style and pre-synthesis rules, and the fast synthesis stage allows block- and chip-level rules to be checked.

There are various rule deck options including STARC, CDC and DO-254 to name a few, each of which can be configured (and combined if required) to suit my coding best practices.
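As a rough illustration of how a rule policy combines individual checks, the Python sketch below runs two toy rules over a snippet of VHDL text. The rules, their names and the `run_policy` helper are all hypothetical, invented for this example; they are not ALINT-PRO rules or its interface, and a real checker works on compiled and synthesised design data rather than on regular expressions.

```python
import re

# Hypothetical in-house rules, written as plain functions for illustration.
# Each rule takes (line_number, line_text) and returns a message or None.

SIGNAL_DECL = re.compile(r"^\s*signal\s+(\w+)\s*:", re.IGNORECASE)


def rule_lowercase_signal_names(line_no, text):
    """Toy style rule: signal names must be written in lower case."""
    match = SIGNAL_DECL.match(text)
    if match and match.group(1) != match.group(1).lower():
        return f"line {line_no}: signal '{match.group(1)}' is not lower case"
    return None


def rule_max_line_length(line_no, text, limit=100):
    """Toy style rule: keep source lines within a fixed width."""
    if len(text) > limit:
        return f"line {line_no}: line longer than {limit} characters"
    return None


def run_policy(source, rules):
    """Apply every rule in the policy to every line and collect violations."""
    violations = []
    for line_no, text in enumerate(source.splitlines(), start=1):
        for rule in rules:
            message = rule(line_no, text)
            if message is not None:
                violations.append(message)
    return violations


# A policy is simply the combination of rules the team has agreed on.
POLICY = [rule_lowercase_signal_names, rule_max_line_length]

EXAMPLE_VHDL = """\
architecture rtl of counter is
  signal count_q  : unsigned(3 downto 0);
  signal NextCount : unsigned(3 downto 0);
begin
"""

for violation in run_policy(EXAMPLE_VHDL, POLICY):
    print(violation)
```

Adding or removing a function from `POLICY` is the toy equivalent of configuring and combining rule decks to match a team's coding guidelines.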

Figure 5 – Aldec ALINT-PRO DRC Flow

The DRC engine also detects clocks and resets, which allows for clock domain crossing and reset violation analysis. Synthesis constraints can also be read for analysis at the block and chip levels, as Xilinx, Intel (Altera), Microsemi (Actel) and Lattice FPGA libraries are all understood. The violation report can be posted as HTML, which can be very useful for design teams and management, particularly if design engineers are split over different locations.

There is an added bonus if using both Sigasi and ALINT-PRO, as Sigasi has an interface to ALINT-PRO which means that a full DRC can be executed whilst developing your code.

I’m feeling pretty good now as I’ve developed a rule policy based upon my coding guidelines and written some design constraints based on my target technology. I’ve even been looking at Jenkins (Continuous Integration environment) since the verification engineers go on about it so much, to the point where they’ve configured the regression testing to send them an SMS on simulation failure! I’ve managed to configure it to monitor the version control and launch ALINT-PRO each time there is a commit to run critical rules I set in the policy. It posts a report to keep track, so I’m pretty smug! Though I’m still trying to work out the SMS thing … anyway, I can start coding in Sigasi knowing all of this is in place and I haven’t even written any stimulus or assertions. I’m almost ready for that cigar now … but not quite …
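The gating logic behind such a commit-triggered check can be sketched in a few lines of Python. Everything here is an assumption for illustration: the rule identifiers, the `Violation` record and the `gate_commit` function are hypothetical, and the sketch deliberately leaves out how the checker is launched and how its report is parsed, since that depends on the tool and the CI set-up.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Violation:
    rule_id: str   # hypothetical rule identifier taken from a lint report
    file: str
    line: int


# Hypothetical set of rules the team treats as build-breaking.
CRITICAL_RULES = {"CDC_UNSYNCHRONISED_CROSSING", "RESET_MISSING"}


def gate_commit(violations, critical_rules=CRITICAL_RULES):
    """Return (passed, summary) for one commit's lint results.

    The gate passes only if no violation belongs to a critical rule;
    non-critical violations are counted but do not fail the gate.
    """
    critical = [v for v in violations if v.rule_id in critical_rules]
    summary = f"{len(violations)} violation(s), {len(critical)} critical"
    return (not critical, summary)


# Made-up report for a single commit.
report = [
    Violation("NAMING_STYLE", "uart_tx.vhd", 12),
    Violation("RESET_MISSING", "uart_tx.vhd", 48),
]
passed, summary = gate_commit(report)
print(passed, summary)
```

A CI job would typically turn a failed gate into a non-zero exit status so that the build is marked as failed and the report is flagged for attention.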

I just mentioned assertions; these enable verification engineers to ensure that the design’s intended behaviour matches the actual behaviour (assertions will be looked at in greater detail in a future article). There are great advantages to using assertions, namely that they are very easy to read in order to interpret the intended function and they provide a common format across multiple tools. They have become instrumental in complex FPGA verification methodologies and are also used in formal verification.

Formal verification was very much a theoretical topic back in my ASIC days. Today, however, the tools that prove or disprove intended behaviour based upon formal mathematical methods are very much a reality courtesy of OneSpin.
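In an HDL flow, assertions are normally written in a property language such as PSL or SystemVerilog Assertions. Purely as an analogy, the Python sketch below checks one intended behaviour against a recorded trace. The property itself ("every request is acknowledged within two clock cycles"), the trace format and the `check_req_ack` function are assumptions made up for this example.

```python
def check_req_ack(trace, max_latency=2):
    """Return the cycle numbers of requests that were not acknowledged in time.

    trace is a list of (req, ack) pairs, one pair per clock cycle.
    A request in cycle n must see ack in one of the cycles n+1 .. n+max_latency.
    A request too close to the end of the trace to be acknowledged is
    reported as a failure as well.
    """
    failures = []
    for cycle, (req, _ack) in enumerate(trace):
        if not req:
            continue
        window = trace[cycle + 1 : cycle + 1 + max_latency]
        if not any(ack for _req, ack in window):
            failures.append(cycle)
    return failures


# Intended behaviour met: request in cycle 0, acknowledge in cycle 1.
good_trace = [(1, 0), (0, 1), (0, 0)]

# Intended behaviour violated: the acknowledge arrives one cycle too late.
bad_trace = [(1, 0), (0, 0), (0, 0), (0, 1)]

print(check_req_ack(good_trace))
print(check_req_ack(bad_trace))
```

The statement of intent is short and readable, and the same check could be applied to a trace from any source, which mirrors the readability and portability advantages described above.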

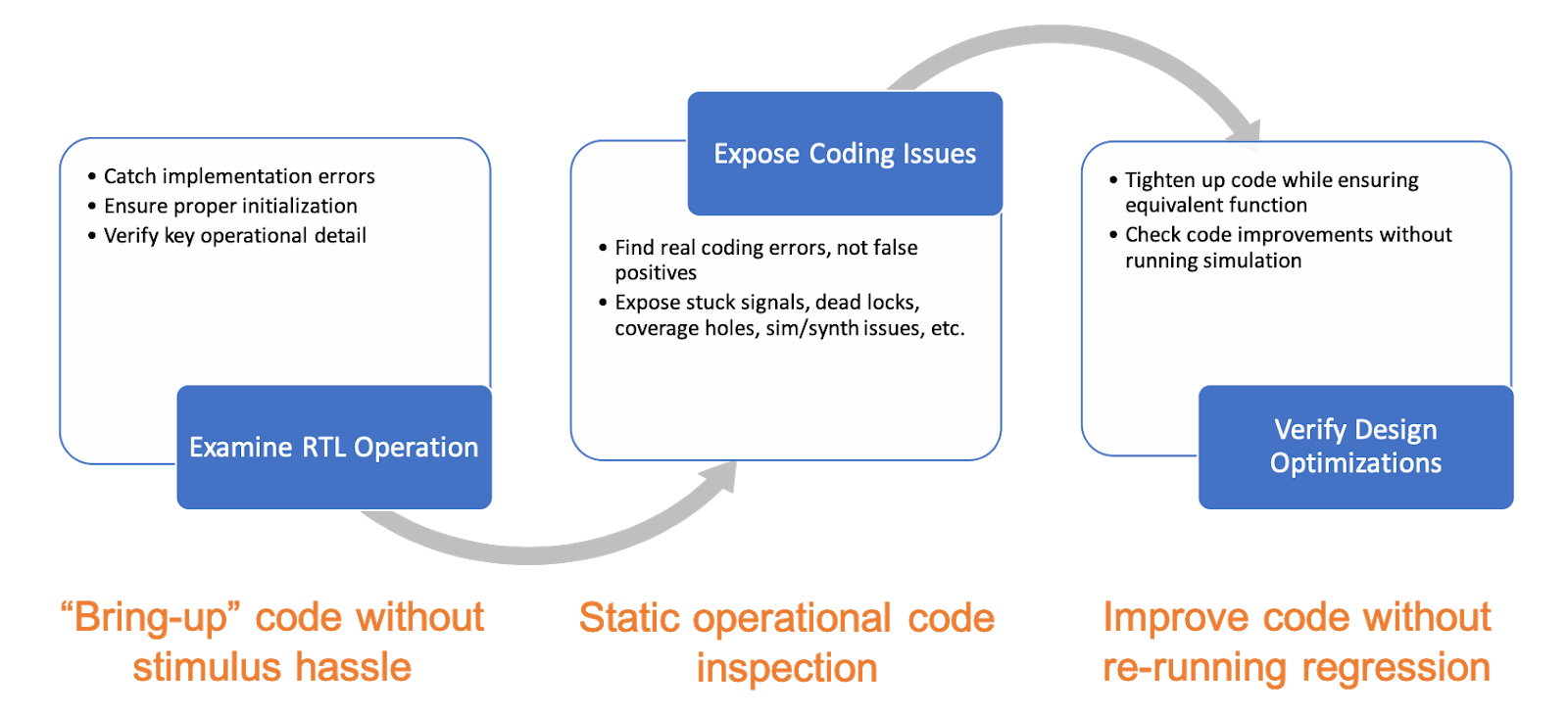

OneSpin have several products in the formal verification space but I am focusing on DV-Inspect since it’s a tool that fits my early methodology requirements.

DV-Inspect reads RTL code, examines its operation and can expose real coding issues using its proprietary proof engines.

Figure 6 – OneSpin DV-Inspect Analysis Flow

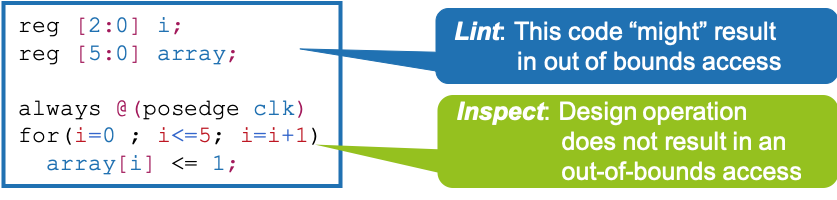

To be clearer about how DV-Inspect works, consider an example where a register is used as an array that is addressed by an index.

Running ALINT-PRO would point out a potential out-of-bounds access based on the types used in the code. This leaves it unclear whether the array is really accessed out of bounds while the code is operating.

Figure 7 – DV-Inspect Code Example

DV-Inspect, however, would either prove that the access is never out of bounds or show a simulation trace from reset to a boundary violation.
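The difference between the two answers can be illustrated with a toy model in Python. The sizes, the two address counters and the function names below are all hypothetical, and the exhaustive search merely stands in for a formal proof engine; it is not a description of how DV-Inspect or ALINT-PRO are implemented.

```python
from collections import deque

ARRAY_DEPTH = 10   # hypothetical: register array with 10 entries
ADDR_BITS = 4      # hypothetical: 4-bit address, so 0..15 can be represented


def lint_style_check(addr_bits, depth):
    """Type-based check: warn if the address type can hold an illegal index."""
    return (1 << addr_bits) > depth


def next_addr_wrapping(addr):
    """Address counter that wraps after the last valid entry."""
    return 0 if addr == ARRAY_DEPTH - 1 else addr + 1


def next_addr_free_running(addr):
    """Address counter that uses the full 4-bit range."""
    return (addr + 1) % (1 << ADDR_BITS)


def prove_in_bounds(next_state, reset_state=0):
    """Search every address reachable from reset.

    Returns None if no reachable address is out of bounds, otherwise the
    shortest trace of addresses from reset to the first violation.
    """
    parent = {reset_state: None}
    queue = deque([reset_state])
    while queue:
        state = queue.popleft()
        if state >= ARRAY_DEPTH:
            trace = []
            while state is not None:
                trace.append(state)
                state = parent[state]
            return trace[::-1]
        successor = next_state(state)
        if successor not in parent:
            parent[successor] = state
            queue.append(successor)
    return None


# The type-based check warns for both designs, because 4 bits can hold 10..15.
print(lint_style_check(ADDR_BITS, ARRAY_DEPTH))

# The exhaustive check separates the safe design from the faulty one.
print(prove_in_bounds(next_addr_wrapping))
print(prove_in_bounds(next_addr_free_running))
```

For the wrapping counter the search finds no violation, which corresponds to a proof; for the free-running counter it returns the sequence of addresses from reset up to the first illegal one, the toy counterpart of a trace from reset.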

Furthermore, any optimisation changes made to the RTL code – for example register optimisations or FSM re-encoding – can be verified with DV-Inspect using its sequential RTL to RTL equivalence checking, negating the need to re-simulate.
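The idea behind checking an FSM re-encoding can be sketched in Python by walking two small state machines in lock step and comparing their outputs for every reachable pair of states. The "two consecutive ones" detector, its encodings and the `find_mismatch` function are hypothetical examples chosen for brevity; this is a simplified explicit-state search, not the algorithm used by any particular tool.

```python
from collections import deque

INPUTS = (0, 1)


def step_binary(state, bit):
    """Original FSM: states encoded as integers 0 (idle) and 1 (seen a 1)."""
    if bit == 1:
        return 1, int(state == 1)   # output 1 on the second consecutive 1
    return 0, 0


def step_one_hot(state, bit):
    """Re-encoded FSM: the same behaviour with one-hot state codes."""
    if bit == 1:
        return "10", int(state == "10")
    return "01", 0


def step_buggy(state, bit):
    """A faulty 'optimisation': the state is not cleared by a 0 input."""
    if bit == 1:
        return "10", int(state == "10")
    return state, 0


def find_mismatch(step_a, reset_a, step_b, reset_b, inputs=INPUTS):
    """Explore every reachable pair of states and compare outputs.

    Returns None if the two machines always agree, otherwise the shortest
    input sequence from reset that makes their outputs differ.
    """
    start = (reset_a, reset_b)
    path_to = {start: []}
    queue = deque([start])
    while queue:
        state_a, state_b = queue.popleft()
        for bit in inputs:
            next_a, out_a = step_a(state_a, bit)
            next_b, out_b = step_b(state_b, bit)
            path = path_to[(state_a, state_b)] + [bit]
            if out_a != out_b:
                return path
            if (next_a, next_b) not in path_to:
                path_to[(next_a, next_b)] = path
                queue.append((next_a, next_b))
    return None


print(find_mismatch(step_binary, 0, step_one_hot, "01"))
print(find_mismatch(step_binary, 0, step_buggy, "01"))
```

When the search returns `None`, every input sequence produces the same outputs from both versions, so in this toy setting no re-simulation is needed to trust the re-encoded machine; otherwise the returned input sequence pinpoints where the behaviour diverges.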

Because of the use of formal methods and creation of formal traces, DV-Inspect can create testbenches or write out assertions for simulation.

So not only am I confident that I have solid code, checked for correct design operation and conforming to my internal coding guidelines, I have also checked my clock and reset strategy against my target technology and been able to generate a set of assertions ready for simulation.

Having a few tools for early design analysis in my methodology has addressed the goal of reducing uncertainty during the debugging phase of verification. It is likely that up to 80% of typical RTL coding mistakes can be found at no cost during the early design stages. It means that future projects can be planned more confidently; I get the view, the leg room and I know the management are happy as I don’t even have to buy my cigars anymore … anyone got a light?

https://info.firsteda.com/sigasi-studio

https://firsteda.com/products/aldec/alint/